PhD Student in Systems and Computer Engineering

Lecturer in Economic Engineering

Specialist Data Science

I am a mathematician passionate about programming, data science, and applied mathematics. I have proven expertise in machine learning and data analysis, gained through research and professional roles, and I bring collaborative and leadership skills to creative and effective solutions.

Through my doctoral research, I study how fairness interventions in crime prediction systems affect downstream outcomes across the predictive policing pipeline. I develop data-driven causal inference methods to evaluate the propagation of bias and support ethical, evidence-based decision-making in public safety.

Education

- Universidad Nacional de Colombia, Bogotá D.C., Colombia. Department of Systems and Industrial Engineering
  Ph.D. Student, Jun. 2022 - present

- Universidad Nacional de Colombia, Bogotá D.C., Colombia. Department of Mathematics
  B.Sc. in Mathematics, Feb. 2016 - Jun. 2021

- Universidad Distrital Francisco José de Caldas. Department of Engineering
  B.Eng. in Geodesic Engineering, Feb. 2011 - Jun. 2016

Experience

- Universidad Nacional de Colombia
  Lecturer in Economic Engineering, Jun. 2024 – Present

- Rappi Latin America
  Specialist Data Science, Nov. 2021 – Present

- NUVU
  Data Science, Mar. 2020 – Oct. 2021

Honors & Awards

- Honorable Mention at the Colombian National Programming Olympiad, 2017

Selected Publications

Quantifying Fairness in Spatial Predictive Policing

Diego Hernández, Cristian Pulido, Francisco Gómez

Submitted to Artificial Intelligence and Law (major revisions). Spotlight.

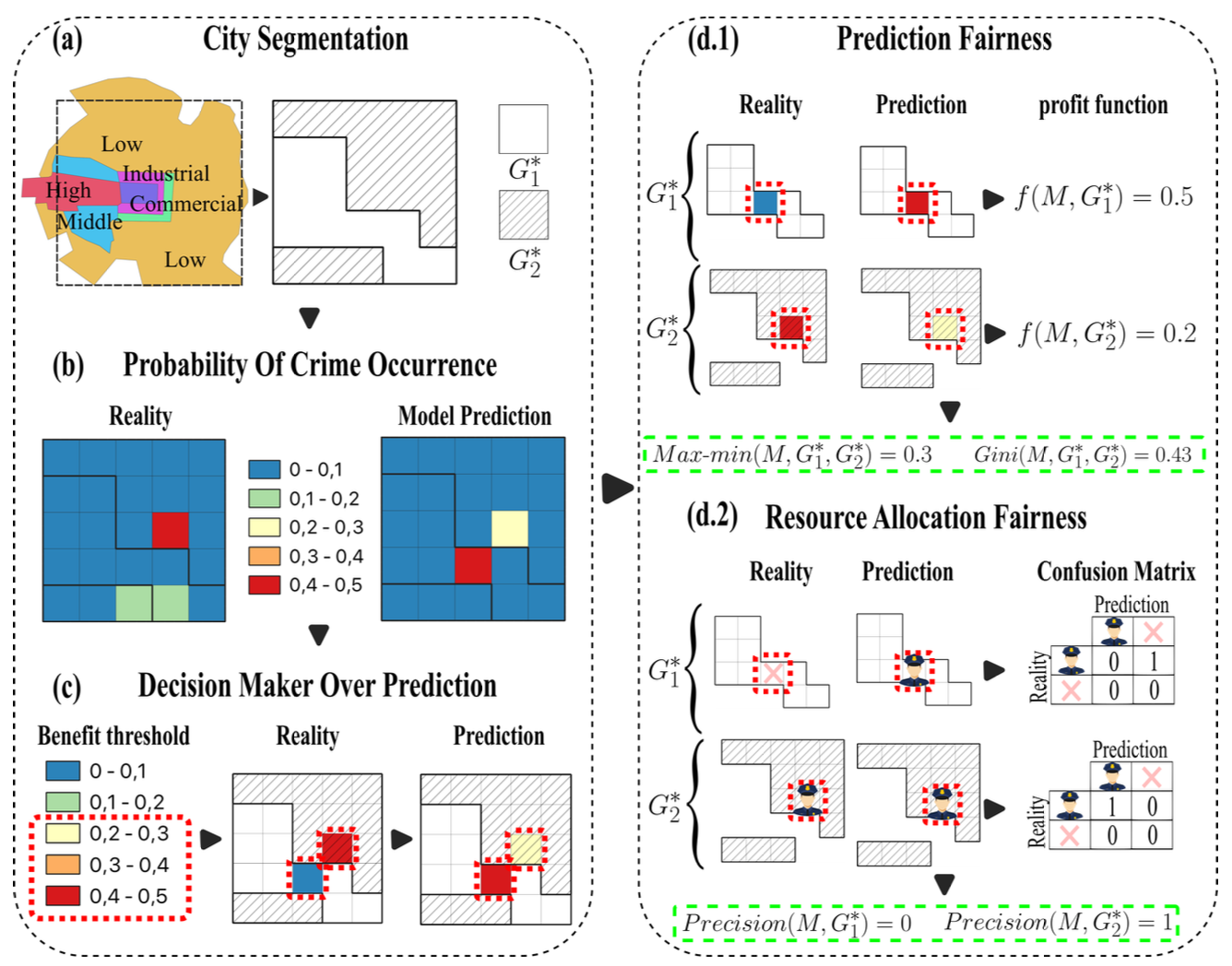

Predictive policing leverages data-driven models to anticipate future criminal events and guide law enforcement strategies. However, concerns about algorithmic fairness have emerged, as these models risk perpetuating discrimination and inequities, particularly among vulnerable populations. While prior research has acknowledged the influence of disparities in crime reporting levels on these models, the extent of their impact on vulnerable populations remains insufficiently understood, posing a critical challenge in societies marked by high disparities. This study seeks to quantify the fairness of three prevalent probability density estimation models used in predictive security. Specifically, it examines their capacity to distribute benefits impartially across diverse populations in various spatial contexts. Real-world theft data were used to calibrate the three predictive models, and model fairness was then quantified through two different measurement approaches. These measurements assess disparities in the benefits the model grants to two geographical areas: one focuses on the average prediction error, and the other on the use of the model for resource allocation. Results suggest that the predictive security models studied may be fair in terms of prediction but unfair when used for patrol allocation, with a maximum difference between group means of 45% and an average difference of 12%. This highlights the nuanced nature of fairness considerations within predictive policing frameworks.
